In school, I hand-wrote almost everything. Not out of principle — I just noticed that typing didn't stick the same way. Forming the words on the page wired the material into my brain in a way that staring at a screen never did. I almost never went back to read those notes. That was never the point. The writing was the studying.

Early career: switched to a laptop. Didn't work. Back to pen. Then somewhere along the way — I genuinely couldn't tell you when — that changed. Typing worked. My brain adapted.

I didn't know it at the time, but there's a name for what was happening. More on that in a second.

I've been reflecting on this a lot lately because I'm noticing that same inflection point again. Except this time, the stakes are different.

What You're Actually Handing Off

The prevailing model goes something like this: AI handles the tedious stuff — drafting, summarizing, structuring — so you can focus on what actually matters. Judgment. Strategy. The hard calls.

It sounds like efficiency. It feels like it. It's mostly wrong.

I'm currently deep in a project — AI-native from the start, better-documented than anything I've worked on in 20 years. Every decision logged. Every dependency mapped. And early on, rather than asking AI to synthesize it all and hand me the short version, I went through the structure manually. Checked whether the milestones actually reflected the work that was planned. Traced the dependencies to see if they were wired correctly or just named. Built my own simplified version to see if I could hold it in my head.

That felt slow. It felt like exactly the thing I was supposed to hand off.

What I realized is that this was the same thing I'd been doing years ago with those handwritten notes. The version of the work I was most tempted to skip was the version that was building my ability to lead the rest of it.

Better Outputs, Weaker Models

As it turns out, this is a well-documented phenomenon in cognitive psychology, and it has a name:

The generation effect: your brain encodes information significantly better when it generates that information itself than when it simply reviews it. The effort isn't overhead — it's the actual process of learning.

When AI generates the artifact — the plan, the structure, the synthesis — it removes that friction. You get the output without the wiring. You can review it, approve it, even improve it. But you haven't built the internal model that makes you effective when the plan breaks and someone in the room needs to actually know the answer.

The effort is the point. The friction is doing something.

The research is starting to confirm what a lot of us probably already sense. Harvard Business Review recently reported that executives who used generative AI to help with forecasting became more confident in their predictions — and less accurate. The AI's authoritative tone produced a sense of assurance that wasn't earned. Fast Company put it more bluntly: AI is creating the first generation of cognitively outsourced humans.

The productivity numbers look great. The judgment numbers are a different conversation.

The Pep Talk You Can't Give Yourself Anymore

I carry some version of imposter syndrome. I'd bet a lot of people reading this do too — they just don't say so. The way I've always managed it is by falling back on what I actually know. That internal inventory: I've navigated this kind of problem before. I know how it tends to go sideways. I've been in the room when this decision went wrong, and I know exactly why.

That's the anchor: not "fake it till you make it," but the foundation of actual knowledge you've earned by doing the work.

AI is eroding that anchor, and you may not notice until you reach for it.

If you've been moving fast, offloading the verification layer, reviewing rather than generating — you might reach for that inventory and find it lighter than you expected. Not because you've forgotten anything. Because you didn't encode it in the first place.

You're moving faster, and that's great. But in your gut, you've probably already noticed that you understand less. And that gap has a way of throwing a spanner in the works at exactly the wrong moment.

The Rep You're Skipping

The fix isn't to stop using AI. That's not the argument.

The argument is about when in the process you bring it in.

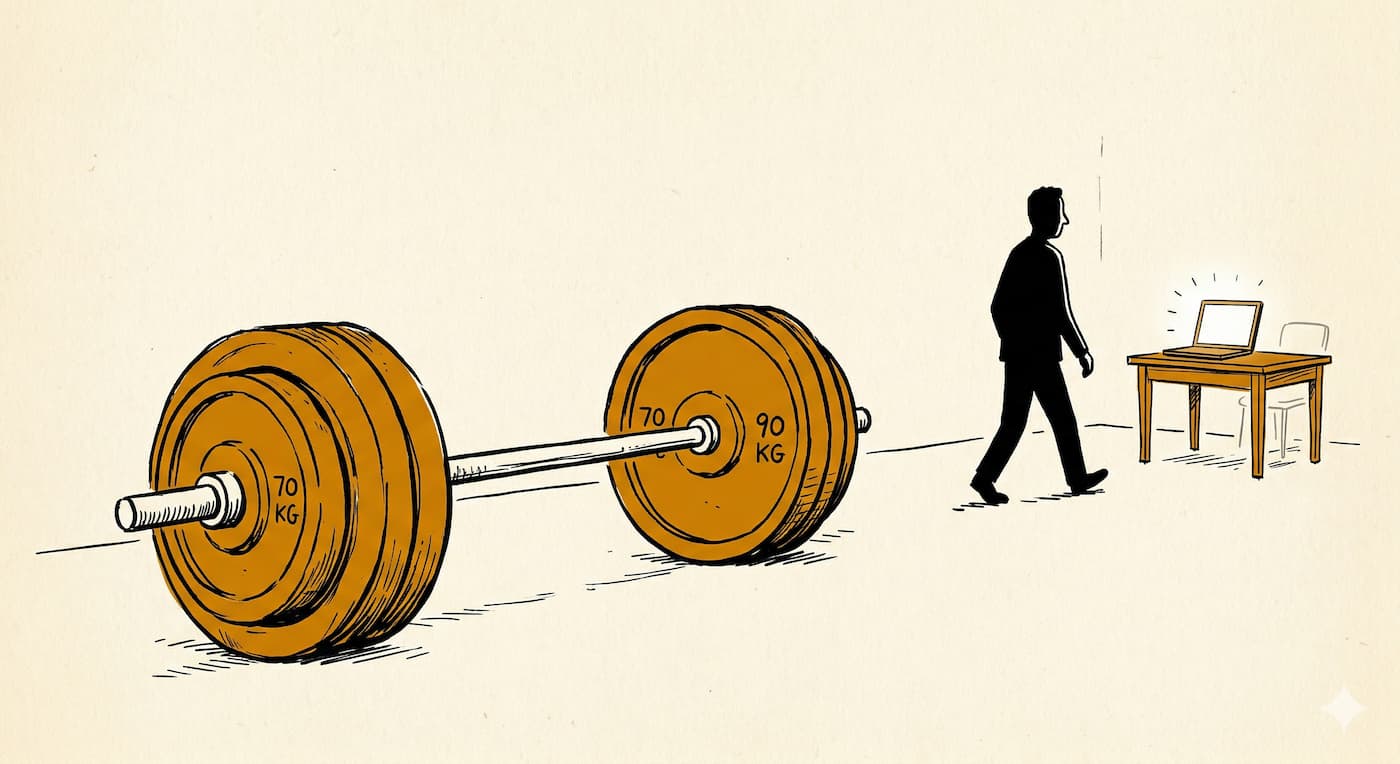

Think of it like skipping leg day. You can get away with it for a while. The rest of the workout looks fine. The deficit is invisible until it isn't — and by then you're mid-squat.

The generation effect has a practical implication: you have to engage before you offload. Form a point of view on the structure before asking AI to validate it. Trace the dependencies yourself before asking for a summary. Interrogate the plan critically before handing it off to be automated. Not because AI can't do those things — it often does them faster and cleaner than you would. But because doing them first is what builds the internal model that makes everything downstream better.

The discipline to insert that intellectual engagement step before the offload, even though AI could just handle it, is a specific cognitive muscle. It has to be worked intentionally, or it atrophies.

The teams that figure this out will be the ones still standing when the model hallucinates, the plan breaks, and someone in the room actually needs to know the answer.

That person has to have done the work first.