Eight weeks. Fixed timeline, hard stop. By the time this project kicked off, an AI-generated backlog was already waiting — use cases mapped, tickets written, sprints laid out. The team could hit the ground running on day one without anyone needing to ask what they were building. The documentation existed. The structure was sound. Everything was in the system.

And that's exactly where the risk lives.

A backlog is not a mental model. Tickets are not understanding. A team that can work through a queue efficiently hasn't necessarily built any shared picture of the thing they're actually making. In an AI-generated project, that gap opens faster than most people expect — and it's subtle enough that you might not notice it until something goes sideways.

Access Isn't the Same Thing as Knowing

Last week I wrote about the generation effect: the cognitive science behind why your brain encodes information better when it generates that information itself, rather than reviewing something someone (or something) else produced. AI removes the effort of generating, and that effort is what does the encoding. You get the artifact without building the internal model.

That post was mostly about what happens to you as an individual when you offload too much of the comprehension work. This week I want to pull the camera back.

What happens to the team?

Here's how it plays out: the backlog drops, the team starts executing, and because everyone has access to the information, it's easy to forget to check whether anyone has actually internalized it. The devs who want context will seek it out. The ones who prefer heads-down execution will build what's in front of them. Both are doing their jobs. Neither is wrong. And the shared mental model — the thing that makes good judgment calls possible — may never form at all.

Access is not understanding. In an AI-generated project, confusing the two is the default failure mode.

This isn't an execution problem. It's a decision problem. Execution is just where it shows up — when the edge case hits, when the sprint needs to be reordered, when someone has to make a call that isn't covered by a ticket.

We Still Needed a Map

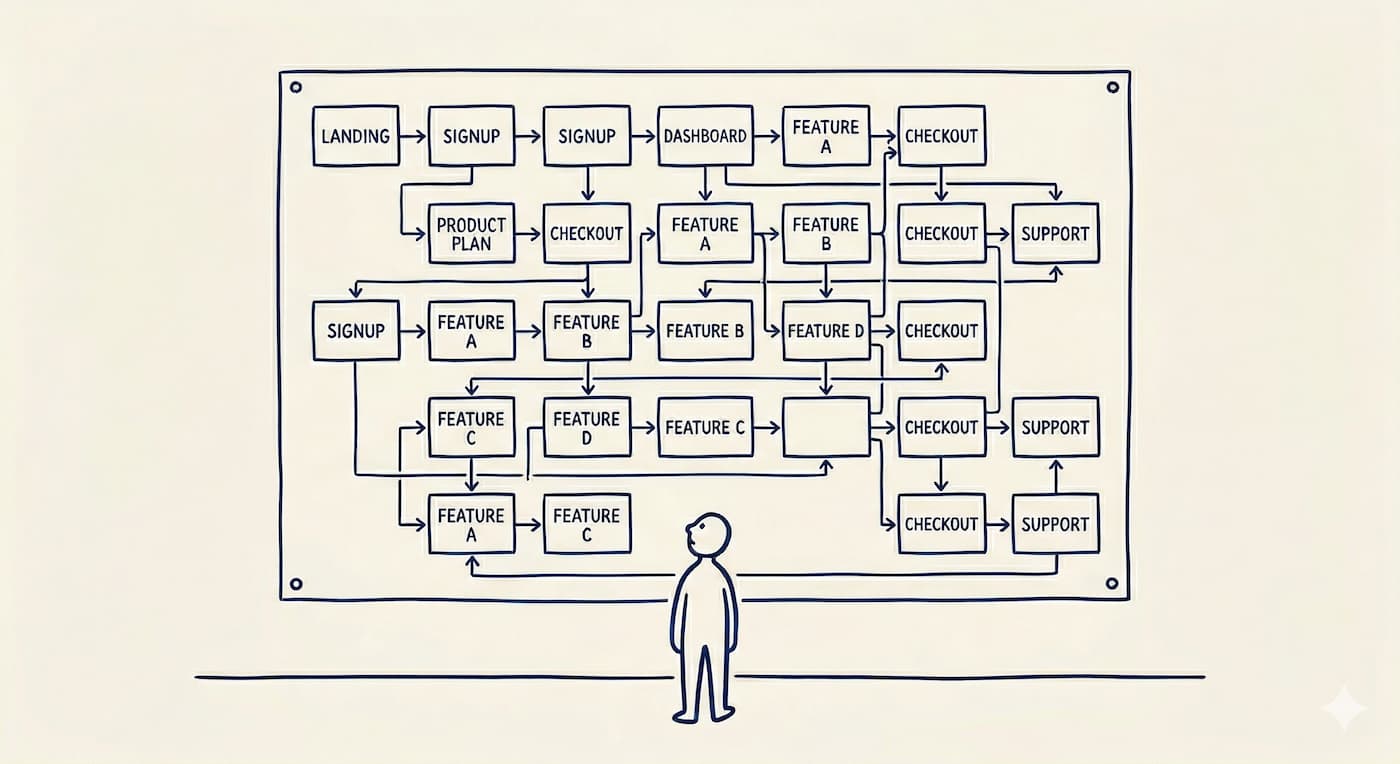

So this week I built one. Not a status tracker, though it works as one too. It's a user journey diagram, AI-generated in a fraction of the time it would have taken by hand. It maps the full product flow, links each module to its epics and sprints, and lets us tick modules off as each piece ships.

The thing about a journey map is that you can't really read it without holding the larger flow in your head. To make sense of it, you have to understand why one box comes before another — what a completed auth flow implies about the payment flow downstream, what it means when a cluster of boxes lights up together versus ones that stay dark. The artifact requires internalization to interpret. That's not a side effect. That's the whole point.

When I shared it, one of the devs immediately tagged a teammate. No prompt. Just a tag. Small signal, but the right one. It suggests someone saw their work connecting to something larger than the ticket in front of them.

One data point. Too early to call it a win. But the right kind of response.

And here's the part that makes this a bit different from the old-school approach: I'm not manually maintaining this thing. I built an agent in Claude that updates the diagram as tickets close. The map stays current without me touching it. That's the actual unlock AI offers here — not just generating the artifact once, but keeping it alive without overhead.

The Questions Worth Sitting With

If a developer on your team makes a judgment call at the edge of a ticket — slightly out of scope, but clearly adjacent — do they have enough context to make a good one? Or are they working from the ticket alone?

If your sprint priorities shifted tomorrow, could you explain why the reorder makes sense in terms the team would find meaningful? Or would it feel like a PM moving boxes around for no visible reason?

If someone asked your team to describe what the product does — not the features, the product — how many different answers would you get?

And the one that rarely gets asked at all: when you handed off the comprehension work to AI, who was supposed to re-internalize it on the other side?

In a pre-AI project, producing the artifacts was what built shared understanding. When AI generates the artifacts, that mechanism disappears. Something has to replace it.

When One Door Closes...

I want to close the loop here, because the last few weeks of posts have risked reading like a slow-motion warning about a problem with no solution.

The honest version is this: AI made some of the traditional mechanisms for building shared team understanding obsolete. Writing the spec together, arguing over a user story, watching someone trace a dependency graph by hand — inefficient, yes. But those activities were also how teams built mental models together. We traded that friction for velocity and mostly didn't notice what went with it.

But a door opened too. I can generate a user journey diagram for a full product in a fraction of the time it used to take. I can keep it current with an agent instead of a spreadsheet and a weekly ritual. I can create something that empowers the team to engage with the larger picture, rather than hoping they'll seek it out on their own.

You can't mandate internalization. But you can make the conditions for it hard to avoid: open every sprint with the map on screen, make it the reference point when edge cases surface, and let the agent post an updated version every time something meaningful ships. The people who want context have an easy on-ramp. The ones who don't will start to feel the gap when conversations happen around a map they haven't looked at.

The work is in recognizing which friction was doing something useful and replacing it on purpose. Not recreating the old process for its own sake. Not offloading everything and hoping context spreads by osmosis. Something intentional in between.

It's an adaptation. The teams that figure it out will have something the ticket-queue teams won't: people who know what they're building, not just what they're building next.

Last week's piece on the generation effect is here, and the one before it on what AI actually costs you cognitively is here. If any of this is landing — subscribe to Closing the Loop for weekly takes from the field.